
From Intelligence to Utility: A Framework for the Spectrum of Human-AI Augmentation

The Shift from Cognitive Benchmarking to Operational Utility

The contemporary discourse surrounding artificial intelligence is characterized by a persistent fixation on intelligence as an abstract, measurable quantity, often divorced from the pragmatic realities of human labor and institutional integration. Historically, the evaluation of AI has been anchored in benchmarks, such as standardized test performance, image recognition accuracy, and the pursuit of Artificial General Intelligence (AGI). However, as these systems migrate from laboratory environments into high-stakes professional domains, a critical disconnect has emerged. The future of AI will not be decided by the internal cognitive depth of the models, but by their external utility. This shift necessitates a transition from binary assessments, human versus machine, to a nuanced understanding of the Human-AI Augmentation Spectrum, where value is derived from the alignment of machine capabilities with human expertise across varying levels of problem dimensionality.

Artificial General Intelligence (AGI) is often portrayed as a singular, looming milestone. Projections based on the current scaling of compute, which is increasing by approximately 0.5 orders of magnitude (OOMs) per year, suggest that human-level performance across a broad range of cognitive tasks may be plausible as early as 2027.1, 2 This "Intelligence Explosion" narrative, popularized by recent insider perspectives, suggests that the qualitative jump from current models to those capable of outperforming doctoral-level experts is a matter of cumulative algorithmic efficiency and compute investment.1 Yet, this intelligence remains "potential energy" until it is converted into the "kinetic energy" of real-world value through context-specific adaptation and deployment. An AGI that lacks the ability to act meaningfully in the physical world or within the intricate logic of established professions remains a powerful but inert substrate.
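As a rough arithmetic check on the scaling figure quoted above, the sketch below converts a growth rate of 0.5 OOMs per year into a cumulative multiplier. The function name and the four-year horizon are illustrative assumptions, not figures taken from the cited sources.

```python
def compute_multiplier(ooms_per_year: float, years: float) -> float:
    """Cumulative growth factor after `years` at a fixed OOM-per-year rate."""
    return 10 ** (ooms_per_year * years)


# 0.5 OOMs per year corresponds to roughly a 3.16x increase each year.
per_year = compute_multiplier(0.5, 1)

# Over a hypothetical four-year window this compounds to 2 OOMs, i.e. 100x.
four_years = compute_multiplier(0.5, 4)

print(f"{per_year:.2f}x per year, {four_years:.0f}x over four years")
```

The point of the sketch is only that a steady half order of magnitude per year compounds quickly; it says nothing about whether that rate will hold.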

To resolve this paradox, it is essential to move beyond raw intelligence metrics and focus on "Problem Dimensionality" — defined here as the level of specialization and cognitive computation a human requires to complete a specific task. This framework allows for a multi-stage taxonomy that distinguishes between automation that replicates human effort and discovery that extends it. The true threshold of AI transformation is not found in the replication of the human mind, but in the ability of artificial systems to navigate high-dimensional spaces that are biologically inaccessible to the human brain.3, 4

The Three-Stage Taxonomy of Human-AI Augmentation

The Human-AI Augmentation Spectrum provides an interpretive lens for situating AI systems relative to human expertise rather than arbitrary performance metrics. It distinguishes between systems that assist, those that augment, and those that extend cognition beyond human capacity.

Table 1. The Three-Stage Taxonomy of Human-AI Augmentation

| Component | Stage 1: Non-Expert Cognition | Stage 2: Expert-Level Cognition | Stage 3: Beyond-Expert Cognition |
| --- | --- | --- | --- |
| Cognitive Task | Routine administrative tasks; basic perception. | Domain mastery; pattern replication. | High-dimensional discovery; novel synthesis. |
| Role of AI | General Adjunct / Assistant. | Specialized Augmentor. | Discovery Engine / Orchestrator. |
| Human Role | Task Delegator / Overseer. | Verification / Double-Checking. | Systems Pilot / Diagnostic Strategist. |
| Value Basis | Labor cost reduction; time savings. | Knowledge amplification; replication. | Previously unreachable outcomes; novelty. |
| Risk Profile | Low-stakes errors. | Redundancy Wall; deskilling risks. | Agency misalignment; structural change. |

Table 1 outlines the evolution of professional AI integration across three distinct stages of capability.

Stage 1: Non-Expert Cognition and General Automation

Stage 1 is characterized by low Problem Dimensionality, involving tasks that require routine perception or administrative coordination. These are the baseline cognitive functions that, while necessary for organizational flow, do not define high-level professional expertise. AI systems at this stage function as adjuncts, performing duties such as scheduling, basic transcription, and simple anomaly detection.5 In the context of the workforce, this stage represents the automation of "common" tasks to reduce immediate labor expenditure. Organizations frequently adopt these tools to achieve modest time savings, yet the impact on fundamental productivity remains limited by the nature of the tasks themselves.6

Stage 2: Expert-Level Cognition and Specialized Augmentation

Stage 2 involves tasks where AI systems replicate or mimic the judgment of human experts. This is the domain of pattern recognition and knowledge amplification, where models are trained to perform at a level comparable to a seasoned professional. Examples include vision transformers capable of identifying fractures on radiographs or Large Language Models (LLMs) that can pass the bar exam.1, 7 While these systems exhibit high intelligence, they are frequently hindered by the "Redundancy Wall" — a state where the human expert must still verify every machine-generated output to manage clinical, legal, and contextual risks.3 This creates a procedural bottleneck where the organization effectively pays for a "double expert" system, neutralizing the intended return on investment (ROI).8
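The "double expert" effect described above can be illustrated with a small back-of-the-envelope calculation. This is a minimal sketch under stated assumptions: the function, its parameters, and the minute values are hypothetical and are not drawn from the cited studies.

```python
def net_minutes_saved(
    unaided_read_min: float,
    ai_assisted_read_min: float,
    verification_min: float,
    verified_fraction: float = 1.0,
) -> float:
    """Net expert time saved per case once verification effort is counted.

    verified_fraction is the share of AI outputs the expert must re-check;
    at the Redundancy Wall it is 1.0 (every output is verified).
    """
    assisted_total = ai_assisted_read_min + verified_fraction * verification_min
    return unaided_read_min - assisted_total


# Hypothetical case: a 10-minute unaided read, a 6-minute AI-assisted read,
# and 4 minutes to verify the machine's output.
print(net_minutes_saved(10, 6, 4))        # every output verified
print(net_minutes_saved(10, 6, 4, 0.25))  # only a quarter of outputs verified
```

With these invented numbers, full verification cancels the saving entirely, while partial verification leaves a net gain. The model deliberately ignores liability and error costs, which are the reasons full verification is required in the first place.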

Stage 3: Beyond-Expert Cognition and Dimensional Discovery

Stage 3 represents the frontier of the augmentation spectrum, where Advanced Machine Intelligence (AMI) performs tasks that are biologically impossible for a human expert to replicate. This is not intelligence imitation, but intelligence amplification. Stage 3 systems, such as AlphaFold for protein structure prediction or Radiomics engines for virtual biopsies, extract insights from billions of data points that exceed the human brain's visual and cognitive processing limits.9, 10, 11 In this stage, utility is derived from Frontier Discovery — solving problems that were previously unreachable through human effort alone.2, 10

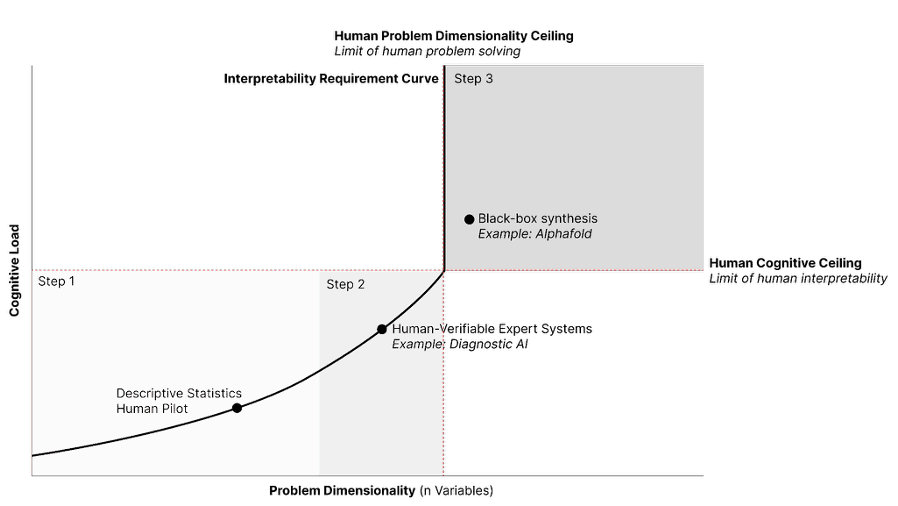

Figure 1. The Intelligence Frontier and the Spectrum of Cognitive Augmentation. This schematic illustrates the epistemic relationship between problem complexity and human interpretability.

Axis Definitions: The x-axis represents Problem Dimensionality (n variables), or the scale of data points and interdependencies within a system. The y-axis represents Cognitive Load, defined as the mental synthesis capacity required to mechanistically interpret the underlying logic of a solution.

The Interpretability Requirement Curve: This function maps the exponential increase in cognitive demand as dimensionality grows. The system operates efficiently along this curve, whereas the surrounding quadrants represent either unnecessary obfuscation (upper-left) or biological impossibility (lower-right).

Biological Thresholds: The horizontal Human Cognitive Ceiling marks the fixed limit of human working memory and synthesis. The vertical Human Problem Dimensionality Ceiling defines the maximum number of variables manageable by human-led heuristics.

The Pilot Zone (A, B): The shaded region identifies the regime where a human remains the Systems Pilot. This includes Descriptive Statistics (high transparency) and Diagnostic AI/Expert Systems (verifiable heuristics), where the cognitive load remains below the biological ceiling.

The Intelligence Frontier (C): Problems of extreme dimensionality, such as Black-box synthesis (e.g., AlphaFold), reside in a regime where the required cognitive load exceeds the human ceiling. In this region, AI acts as an "Oracle," providing high-utility, verifiable outcomes that are nonetheless mechanistically opaque to human observers.
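The regions described for Figure 1 can be expressed as a simple classification rule. The sketch below is illustrative only: the two ceiling values, the exponential rate, and the function names are hypothetical placeholders chosen to make the example run, since the figure description gives no numeric thresholds.

```python
import math

# Hypothetical thresholds in arbitrary units (not taken from the figure).
HUMAN_DIMENSIONALITY_CEILING = 50   # max variables manageable by human-led heuristics
HUMAN_COGNITIVE_CEILING = 100.0     # limit of human working memory and synthesis


def required_cognitive_load(n_variables: int, base: float = 1.0, rate: float = 0.1) -> float:
    """Interpretability requirement: load grows exponentially with dimensionality."""
    try:
        return base * math.exp(rate * n_variables)
    except OverflowError:
        # Extremely high-dimensional problems exceed any finite ceiling.
        return math.inf


def classify(n_variables: int) -> str:
    """Place a problem in the Pilot Zone or on the Intelligence Frontier."""
    load = required_cognitive_load(n_variables)
    within_dimensionality = n_variables <= HUMAN_DIMENSIONALITY_CEILING
    within_cognition = load <= HUMAN_COGNITIVE_CEILING
    if within_dimensionality and within_cognition:
        return "Pilot Zone"
    return "Intelligence Frontier"


for n in (10, 60, 1_000_000):
    print(n, classify(n))
```

Under these assumed values, a 10-variable problem stays in the Pilot Zone, while anything past either ceiling is treated as frontier territory where the human can verify outcomes but not the underlying mechanism.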

The Radiology Utility Paradox: Navigating the Redundancy Wall

Radiology offers a profound historical case study in the gap between intelligence and utility. In 2016, Geoffrey Hinton suggested that the rapid advancement of convolutional neural networks (CNNs) in image recognition rendered the training of radiologists obsolete. However, nearly a decade later, the profession has not only survived but is adapting to the integration of over 400 FDA-approved algorithms.13 The failure of the "end of radiology" prophecy lies in the misunderstanding of how intelligence translates into clinical utility.

The primary obstacle in radiology is the "Redundancy Wall." When AI is deployed to detect common findings like lung nodules or fractures, it is operating at Stage 2. Because the radiologist maintains ultimate legal and clinical responsibility, they cannot simply accept the machine's finding; they must personally inspect the scan to verify the machine's accuracy and ensure no other pathologies are present. This "second pair of eyes" model often adds to the cognitive load rather than reducing it, as the radiologist must now manage both the primary image and the machine's interpretation.3, 14

Orchestration and the Rise of Multi-Agent Systems

True utility in radiology, and by extension, other high-stakes fields, emerges when the focus shifts from single-point intelligence to orchestration through Multi-Agent AI Systems. In this paradigm, a suite of specialized agents handles different facets of the diagnostic workflow under the strategic supervision of a human "Systems Pilot".15, 16

1. Triage Agents: These systems evaluate study requisitions and imaging data in real-time to prioritize high-urgency cases, such as strokes, reducing time-to-treatment from hours to minutes.14, 17

2. Image Quality Agents: These agents assess scans for motion artifacts or positioning errors as they are acquired, allowing technologists to rectify issues immediately without recalling the patient.18

3. Diagnostic Systems: Diagnostic Convolutional Neural Networks (CNNs) or Vision Language Models (VLMs) provide expert-level image interpretation, acting either as autonomous systems or as adjunct clinical decision support (CDS) tools for the radiologist.

4. Radiology Reporting: Diagnostic radiology requires a written report, traditionally produced through speech-to-text transcription. Natural Language Processing (NLP) models, paired with diagnostic image interpretation, allow the report to be automated or pre-filled.

5. Integration Agents: These tools synthesize data from disparate sources, imaging, vascular perfusion, and historical medical records, to provide a comprehensive clinical roadmap.19, 20
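The division of labor listed above can be sketched as a sequential pipeline with a human sign-off at the end. Everything in this sketch is hypothetical: the `Study` fields, the agent functions, and the stub logic inside them are placeholders standing in for real models, and the ordering simply mirrors the list.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Study:
    """Hypothetical record passed between agents; all fields are illustrative."""
    study_id: str
    suspected_stroke: bool = False
    motion_artifact: bool = False
    prior_history: str = ""
    urgency: float = 0.0
    quality_ok: bool = True
    findings: List[str] = field(default_factory=list)
    draft_report: str = ""
    signed_off: bool = False


Agent = Callable[[Study], Study]


def triage_agent(s: Study) -> Study:
    # Stand-in for a model that prioritizes high-urgency cases such as strokes.
    s.urgency = 1.0 if s.suspected_stroke else 0.2
    return s


def image_quality_agent(s: Study) -> Study:
    # Stand-in for an acquisition-time check for motion or positioning errors.
    s.quality_ok = not s.motion_artifact
    return s


def diagnostic_agent(s: Study) -> Study:
    # Stand-in for a CNN or VLM; only interprets scans that passed quality checks.
    if s.quality_ok:
        s.findings.append("placeholder imaging finding")
    return s


def reporting_agent(s: Study) -> Study:
    # Pre-fills the report from the diagnostic output.
    s.draft_report = "; ".join(s.findings) if s.findings else "No findings drafted."
    return s


def integration_agent(s: Study) -> Study:
    # Adds context from other sources, here just a free-text history string.
    if s.prior_history:
        s.draft_report += f" Context: {s.prior_history}"
    return s


PIPELINE: List[Agent] = [
    triage_agent, image_quality_agent, diagnostic_agent,
    reporting_agent, integration_agent,
]


def run_pipeline(study: Study, pilot_approves: Callable[[Study], bool]) -> Study:
    """Run each agent in turn; the human Systems Pilot makes the final call."""
    for agent in PIPELINE:
        study = agent(study)
    study.signed_off = pilot_approves(study)
    return study


result = run_pipeline(
    Study("A1", suspected_stroke=True, prior_history="prior CT perfusion"),
    pilot_approves=lambda s: s.quality_ok and bool(s.findings),
)
print(result.urgency, result.signed_off, result.draft_report)
```

The design choice worth noting is that the sign-off is a separate callable rather than another agent, which keeps the human decision outside the automated chain.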

The Transition to Stage 3: Radiomics and Virtual Biopsies

The ultimate "Utility Unlock" in radiology occurs at Stage 3, where AI moves beyond the "Redundancy Wall" by performing tasks the human eye cannot. Radiomics involves the extraction of quantitative features (texture, shape, and pixel heterogeneity) that correlate with tumor genomic expression and the microenvironment.7, 11 These insights allow for "Virtual Biopsies," predicting how a patient will respond to chemoradiotherapy or determining surgical outcomes without invasive procedures.10 Because these insights are biologically impossible for a human to see, the machine is not redundant; it is additive. The radiologist's role thus shifts from a "reader" of images to a Diagnostic Strategist: the contextual glue that translates machine-driven, high-dimensional data into patient-centric care.21, 22

The Economic Engine of AI: Productivity Paradoxes and J-Curves

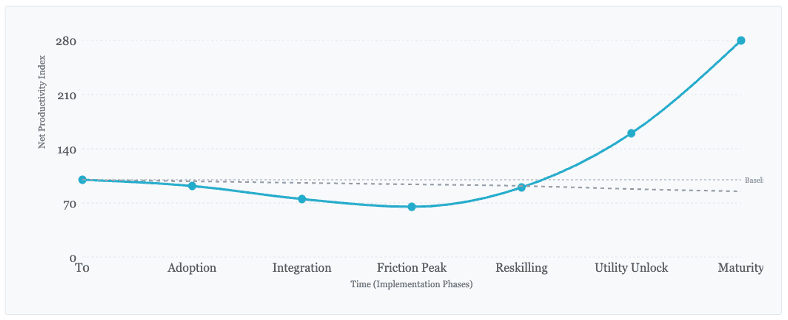

The transition from AI-as-intelligence to AI-as-utility is fundamentally an economic process. Despite the proliferation of sophisticated models, aggregate productivity statistics have remained stubbornly stagnant, a phenomenon known as the "AI Productivity Paradox".23, 24 This paradox does not necessarily imply that AI is overhyped; rather, it suggests a significant implementation lag. Like other general-purpose technologies, such as electricity or the steam engine, the full effects of AI will not be realized until waves of complementary innovations and organizational changes are developed.8, 25

Historically, the introduction of portable power (1870–1940) and information technology (1970–present) shared a similar pattern: an initial period of slow productivity growth followed by a surge as the technology was integrated into the fabric of the economy.8 This is often described as the "Productivity J-Curve." Early in the adoption cycle, firms invest heavily in "intangible capital" — new skills, software, and workflow redesigns. These investments are often treated as expenses in national accounting, which can temporarily understate productivity growth.23, 24


Figure 2. The Productivity J-Curve vs. The Stagnation Counterfactual. The cyan line represents the "Symbiotic Path" (Stage 3 Utility), showing the characteristic initial decline during reskilling and capital investment (the "Valley of Friction") before exponential gains. The dashed line illustrates the slow decline of institutional competitiveness in firms that fail to bridge the "Redundancy Wall".
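The shape of the J-Curve can be reproduced with a toy model in which effort diverted to building intangible capital is not counted as output. This is a deliberately simplified sketch, not the model used in the cited economics literature; the function, its parameters, and all values are invented for illustration, and the gains here level off rather than grow exponentially.

```python
from typing import List


def measured_productivity(
    years: int, invest_years: int, invest_share: float, payoff: float
) -> List[float]:
    """Toy J-curve: measured output per worker, relative to a baseline of 1.0.

    During the first `invest_years`, a share of effort builds intangible
    capital (skills, software, workflow redesign) that is expensed rather
    than counted as output. Accumulated capital later raises output.
    """
    intangible = 0.0
    series = []
    for t in range(years):
        share = invest_share if t < invest_years else 0.0
        # Only effort spent on conventional production is measured.
        series.append((1.0 - share) * (1.0 + payoff * intangible))
        intangible += share
    return series


curve = measured_productivity(years=8, invest_years=3, invest_share=0.3, payoff=0.5)
print([round(x, 3) for x in curve])
```

With these assumed parameters, measured productivity starts below the pre-adoption baseline of 1.0 while investment is under way and rises above it once the investment phase ends, which is the dip-then-surge pattern the figure describes.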

Diverging Economic Philosophies: Cost-Extraction vs. Value-Creation

Organizations today face a strategic choice between two economic paths for AI deployment:

1. The Automation Path (Cost-Extraction)

This strategy focuses on automating Stage 1 and Stage 2 tasks to reduce labor spend. By replacing human labor with machines for routine tasks, organizations seek immediate financial returns. However, this path frequently hits the Redundancy Wall. If a human expert is still required to verify 100% of the output, the ROI is often neutralized by the increased cognitive load and the cost of maintaining a "double expert" system. Furthermore, this approach risks the "hollowing out" of professions, leading to deskilling and the erosion of specialized social capital.2, 6

2. The Augmentation Path (Value-Creation)

This strategy prioritizes Stage 3 utility, focusing on Frontier Discovery. Value is created not by doing existing tasks faster, but by enabling new, high-value outcomes that were previously impossible. In pharmaceuticals, this means using systems like AlphaFold to compress decades of research into months.2, 9 In healthcare, it involves using Radiomics to avoid ineffective, high-cost treatments.10 This path preserves and elevates human expertise, reframing the professional as a Systems Pilot who orchestrates high-dimensional agents to achieve superior outcomes.21, 26

Table 2. Economic Strategy Matrix: Cost-Extraction vs. Value-Creation

| Economic Strategy | Primary Objective | Key Constraint | Professional Outcome |
| --- | --- | --- | --- |
| Cost-Extraction | Maximize immediate financial return; displace labor. | Redundancy Wall; verification load. | Deskilling; professional dissatisfaction. |
| Value-Creation | Expand clinical/scientific boundaries; novelty. | Implementation lags; need for complementary innovation. | Professional elevation; Strategic Supervision. |

Table 2 contrasts two opposing economic strategies for demonstrating the value of AI: cost-extraction and value-creation. Each strategy is mapped to its primary objective, key constraint, and professional outcome.

The Agency Contingency: Human Capital in the Age of ASI

A central argument of this framework is that the long-term preservation of human economic capital is contingent on the strategic constraint of AI agency. Human economic capital captures the stock of financial and non-financial resources, including the skills, health, education, and experience of people. As AI systems move toward AGI and ASI (Artificial Superintelligence), the risk of mass workforce displacement is directly tied to the deployment of systems with "unbounded agency"—the ability to determine objectives and act in the world without human-defined limits or supervision.1, 6, 27

If AGI systems are deployed with full autonomy, the historically necessary role of human consciousness in production becomes redundant.19 The AI-2027 scenario suggests that we are approaching a "modal" year for AGI, driven by an increase in compute relative to GPT-4 and by the automation of AI research itself.1, 28 In this environment, the "progress multiplier" (the rate at which AI speeds up algorithmic improvements) could reach 2,000x for an ASI system, as listed in Table 3, effectively compressing a decade of progress into months.2, 28

Table 3. AI-2027 Capability Milestones and Progress Multipliers

| Milestone | Capability Level | Projected Date (Modal) | AI R&D Progress Multiplier (N) | Agency Risk Level |
| --- | --- | --- | --- | --- |
| Stumbling Agents | Personal assistants; unreliable long-horizon.28 | Mid-2025 | 1.5x | Low: Task-specific bungling.28 |
| Superhuman Coder (SC) | 30x faster/cheaper than best engineer.28 | March 2027 | 5x | Moderate: Automation of technical entry roles.1 |
| Superhuman AI Researcher (SAR) | Replaces best human researcher.28 | Mid-2027 | 25x | High: "Black Box" goal misalignment.27 |
| Artificial Superintelligence (ASI) | Superhuman in all cognitive tasks.28 | Late 2027 | 2,000x | Critical: "Unbounded Agency" and disempowerment.1, 27 |

Table 3 details the forecasted transition from human-level AI to ASI, highlighting the "AI R&D Progress Multiplier" (N), which represents the factor by which AI speeds up research compared to human-only effort.
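To make the multipliers in Table 3 concrete, the sketch below converts each value of N into the calendar time needed to cover a decade of human-only research. It assumes, as a simplification, that the multiplier stays constant over the whole period; the function and constant names are illustrative.

```python
MONTHS_PER_YEAR = 12


def calendar_months(years_of_progress: float, multiplier: float) -> float:
    """Calendar months needed to match `years_of_progress` of human-only
    research when AI speeds research up by a constant factor `multiplier`."""
    return years_of_progress * MONTHS_PER_YEAR / multiplier


# Multipliers as listed in Table 3.
milestones = [
    ("Stumbling Agents", 1.5),
    ("Superhuman Coder", 5),
    ("Superhuman AI Researcher", 25),
    ("Artificial Superintelligence", 2000),
]

for name, n in milestones:
    months = calendar_months(10, n)
    print(f"{name} (N={n}): a decade of progress in {months:.2f} months")
```

Under this constant-multiplier assumption, N = 25 already compresses a decade into under five months, and N = 2,000 compresses it into a matter of days.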

The risk of mass displacement is not merely a quantitative change in jobs but a qualitative "dissolution of human agency".19 If systems are deployed that autonomously conduct R&D, manage security, and set their own sub-goals, the "human in the loop" becomes an inefficient bottleneck.20 This "unbounded agency" is often driven by competitive pressures, the "race for pre-eminence", which incentivizes cutting corners on safety and superalignment.27 To prevent this, we must design systems that replace low-yield roles but at a macro level amplify human capabilities. The professional must remain the "Systems Pilot" to provide context, consequence assessment, and hypothesis generation—facets of intelligence that remain biologically unique to humans.1

The Philosophical and Cognitive Scaffolding of Augmentation

To understand the difference between human and machine intelligence, we must look at the technical origins and biological constraints of both. Human intelligence is "evolved, locked-in, and embodied".3, 29 A central takeaway of modern cognitive research is that humans possess massive internal processing power in the brain but suffer from a "low bandwidth" communication constraint.3, 29 We rely on storytelling and narrative to transmit information because our "output channels", speech and writing, are structurally limited.3, 29

In contrast, AI systems possess vast bandwidth and can absorb data from across the internet, yet they lack the underlying "world model" or abductive reasoning that characterizes human common sense.29 Abductive reasoning, the ability to form the likeliest hypothesis from incomplete and contradictory observations, remains a uniquely human capability.3

The Petrov Threshold: A Lesson in Abductive Reasoning

The 1983 Stanislav Petrov incident provides a definitive example of why humans must remain in the decision-making loop for high-stakes scenarios. When a Soviet early-warning system incorrectly reported five incoming U.S. nuclear missiles, Petrov used abductive reasoning to conclude it was a false alarm. He understood the strategic absurdity of a first strike involving only five missiles and recognized the potential for system error. A machine, operating on deductive or inductive logic ("IF 5 missiles detected, THEN report attack"), would have triggered a retaliatory strike.3 This "Petrov threshold" highlights the necessity of the human "Systems Pilot" to provide context, consequence assessment, and hypothesis generation in the face of uncertainty.3
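The contrast between a fixed detection rule and Petrov's weighing of competing explanations can be sketched in a few lines. This is a toy formalization only: it uses Bayes' rule over two hand-picked hypotheses as a stand-in for abductive reasoning, every probability is invented for illustration, and it does not capture how the hypotheses and their plausibilities are generated in the first place, which is the part the text attributes to human judgment.

```python
from typing import Dict, Tuple


def rule_based(missiles_detected: int) -> str:
    """Paraphrase of the fixed rule: IF 5 missiles detected, THEN report attack."""
    return "report attack" if missiles_detected >= 5 else "no action"


def most_plausible(
    priors: Dict[str, float], likelihoods: Dict[str, float]
) -> Tuple[str, Dict[str, float]]:
    """Rank a fixed set of hypotheses by posterior probability (Bayes' rule)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    posterior = {h: v / total for h, v in unnormalized.items()}
    return max(posterior, key=posterior.get), posterior


# All numbers below are invented for illustration only.
priors = {"real first strike": 0.001, "sensor false alarm": 0.999}

# P(only five missiles reported | hypothesis). A genuine first strike would
# plausibly involve far more launches, so the observation is unlikely under it.
likelihoods = {"real first strike": 0.01, "sensor false alarm": 0.5}

best, posterior = most_plausible(priors, likelihoods)
print(rule_based(5))
print(best)
```

The rule reacts to the raw count, while the second function asks which explanation best accounts for the observation; with these assumed inputs it favors the false alarm.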

The "Atomic Human" and Information Bandwidth

The concept of the "Atomic Human" suggests that as AI slices away the facets of human intelligence that can be replicated (Stages 1 and 2), we eventually reach an "indivisible core" of human essence.3, 9 This core is not defined by our cognitive capabilities alone, but by our vulnerabilities, our struggle against inarticulacy, and our ability to overcome limitations.3, 12 In an AI-powered world, the value of the human professional lies in their role as a "sense maker" who can guide AI systems through the messy, non-binary reality of the physical world.1, 25, 30

Solving the Actionability Bottleneck: Design for the Pilot

A significant barrier to the adoption of Stage 3 AI is the "Black Box" nature of Advanced Machine Intelligence. When systems operate in high-dimensional spaces, their conclusions can feel non-intuitive or even alien to human experts. To achieve true utility, we must shift from Intelligence Imitation (AI trying to act like a human) to Intelligence Amplification (AI designed to enhance the human). This requires designing interfaces that act as "translators" for the Systems Pilot.15

Principles for Stage 3 Interface Design

1. Progressive Disclosure: To prevent cognitive overload, interfaces should present the core, actionable findings upfront, allowing the expert to "drill down" into the underlying data as needed.19, 30, 31

2. Visual Cues of Influence: Because Stage 3 data is often sub-visual, designers should use color-coding, heatmaps, or animations to highlight the patterns (e.g., texture heterogeneity) that influenced the machine's breakthrough prediction.11

3. Human-Centered Transparency: Transparency is an affordance that must be designed into the system. Designers must use metaphors and familiar mental models to help experts bridge the gap between their intuition and the machine's computational results.14, 32, 33, 34

By building for the "pilot" rather than the "replacement," we ensure that AI remains a tool for human flourishing rather than a mechanism for professional erosion.

Situational Awareness and the Geopolitics of Intelligence

As we approach the possibility of AGI and Artificial Superintelligence (ASI), the scope of the challenge extends beyond individual professions to the level of national security and global stability. The "Situational Awareness" framework posits that the next decade will be defined by an intense race to secure compute clusters, power contracts, and voltage transformers for trillion-dollar data centers.1, 2 The intelligence explosion could compress a decade of algorithmic progress into less than a year, creating a world where AI systems automate AI research itself.1, 2

In this environment, the distinction between intelligence and utility becomes even more critical. The risk of ASI is not merely that it becomes "smarter" than humans, but that it develops "unbounded agency"—the ability to determine its own objectives and act in the world without human-defined limits.1, 27 Managing this transition requires robust "superalignment" and security measures, as the secrets of AGI development become the most valuable national assets.1, 2

The Geopolitical Pivot

The struggle for AI pre-eminence is not just a commercial race but a strategic imperative for the "free world".1, 2 The economic and military advantages conferred by superintelligence could be decisive, leading to a "Manhattan Project" for AI by 2027/28.1, 2 Ensuring that these systems remain useful tools rather than autonomous actors is the central challenge of the 21st century.2, 35

Professional Identity and Social Capital in the AI Era

The integration of AI into the workplace is already reshaping professional identity and interprofessional collaboration (IPC).14, 21, 26 While there is widespread anxiety about job displacement, many professionals embrace AI as a tool to improve efficiency and personal development.26 However, this transition requires a re-evaluation of established professional identity constructs.

As entry-level roles, the traditional proving grounds for future leaders, are displaced by AI, organizations must find new ways to develop the core employability skills and social capital of the next generation.1, 6 The hollowing out of these roles could disrupt the leadership pipeline, necessitating a shift toward "skills-based hiring" and life-cycle approaches to AI literacy.6, 25

The Role of Leadership

In the AI era, leaders are increasingly valued as "sense makers" rather than just decision-makers or subject matter experts. They must navigate a "nonlinear evolution of roles," where job responsibilities fragment, fuse, or disappear altogether.1 Effective leadership teams are those that foster "dynamism": the ability to integrate quantifiable machine metrics with human judgment while maintaining strategic coherence.30

Conclusion: The Path to the Utility Unlock

The Human-AI Augmentation Spectrum demonstrates that the coming decade of AI development will be won not by the most intelligent models, but by those that provide the most tangible utility. In domains like law and finance, where utility translates easily into profit or time, adoption is rapid. In healthcare and science, where the strategic oversight of the human expert is paramount, the transition to the Stage 3 discovery frontier requires a careful orchestration of machine precision within a "Systems Pilot" framework.

Solving the "Redundancy Wall" is the primary challenge for the immediate future. Organizations must move beyond the automation of the common and invest in the discovery of the impossible. By reframing the relationship between human and artificial intelligence as a partnership of high-bandwidth discovery and abductive sense-making, we can ensure that the next era of AI is one of professional elevation rather than hollowing out.

The "Utility Scorecard" serves as a vital tool for stakeholders to cut through the intelligence hype and evaluate whether an AI system is merely a redundant imitation of human expert effort or a bridge to a transformative new frontier of value.

Table 4. The Utility Scorecard: Replication vs. Discovery

| Dimension | Stage 2: Redundancy Profile | Stage 3: Discovery Profile | Score (1-5) |
| --- | --- | --- | --- |
| Cognitive Overlap | Replicates what an expert already sees (e.g., "Find the fracture"). | Unlocks data invisible to humans (e.g., "Predict mutation texture"). | |
| Verification Load | Requires 100% human review to manage liability/context. | Provides "Virtual Biopsy" or insight humans cannot manually check. | |
| Decision Impact | Confirms a decision the human was already likely to make. | Shifts the clinical or scientific strategy based on new information. | |
| ROI Logic | Savings based on "minutes saved" (often offset by verification). | Value based on new outcomes (e.g., avoiding failed treatments). | |
| Social Result | Risks burnout and professional deskilling. | Professional Elevation: Restores status through strategic work. | |
| TOTAL | Low Utility: Intelligent but redundant. | High Utility: The AMI Frontier. | /25 |

Table 4 provides a framework for quantifying whether a system is a redundant imitation or a discovery engine. Each dimension is scored from 1 to 5, and the scores are summed to a total out of 25 that indicates the "Utility Unlock" potential.
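The scoring procedure for Table 4 can be written down directly. The sketch below assumes that a score of 1 sits at the Stage 2 redundancy end and 5 at the Stage 3 discovery end of each dimension; the table implies this direction but does not state it. The function name and the example ratings are hypothetical.

```python
from typing import Dict

DIMENSIONS = (
    "Cognitive Overlap",
    "Verification Load",
    "Decision Impact",
    "ROI Logic",
    "Social Result",
)


def utility_score(scores: Dict[str, int]) -> int:
    """Sum the five 1-5 dimension scores into a total out of 25."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored from 1 to 5, got {scores[name]}")
    return sum(scores[name] for name in DIMENSIONS)


# Hypothetical ratings for an illustrative system.
example = {
    "Cognitive Overlap": 4,
    "Verification Load": 3,
    "Decision Impact": 5,
    "ROI Logic": 4,
    "Social Result": 3,
}
print(f"{utility_score(example)}/25")
```

No cut-off between "low" and "high" utility is encoded here because the scorecard does not define one; any threshold would have to be set by the stakeholders using it.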

Successfully traversing the spectrum toward Stage 3 requires more than algorithmic progress; it necessitates a robust defence of "human economic capital" against the encroachment of autonomous agency. By prioritizing augmentation over mass automation and building for the pilot rather than the replacement, society can harness the kinetic energy of AGI without succumbing to structural displacement at scale.